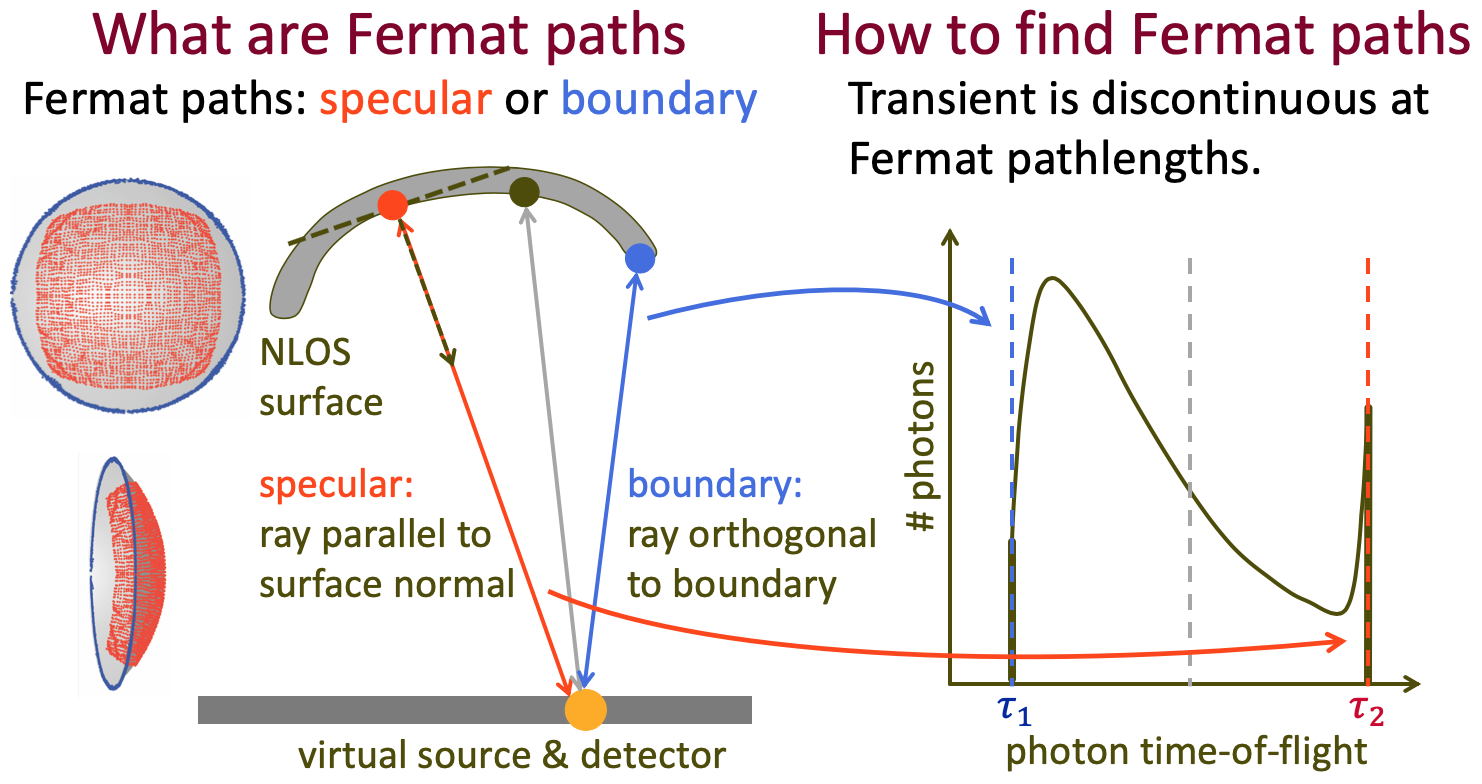

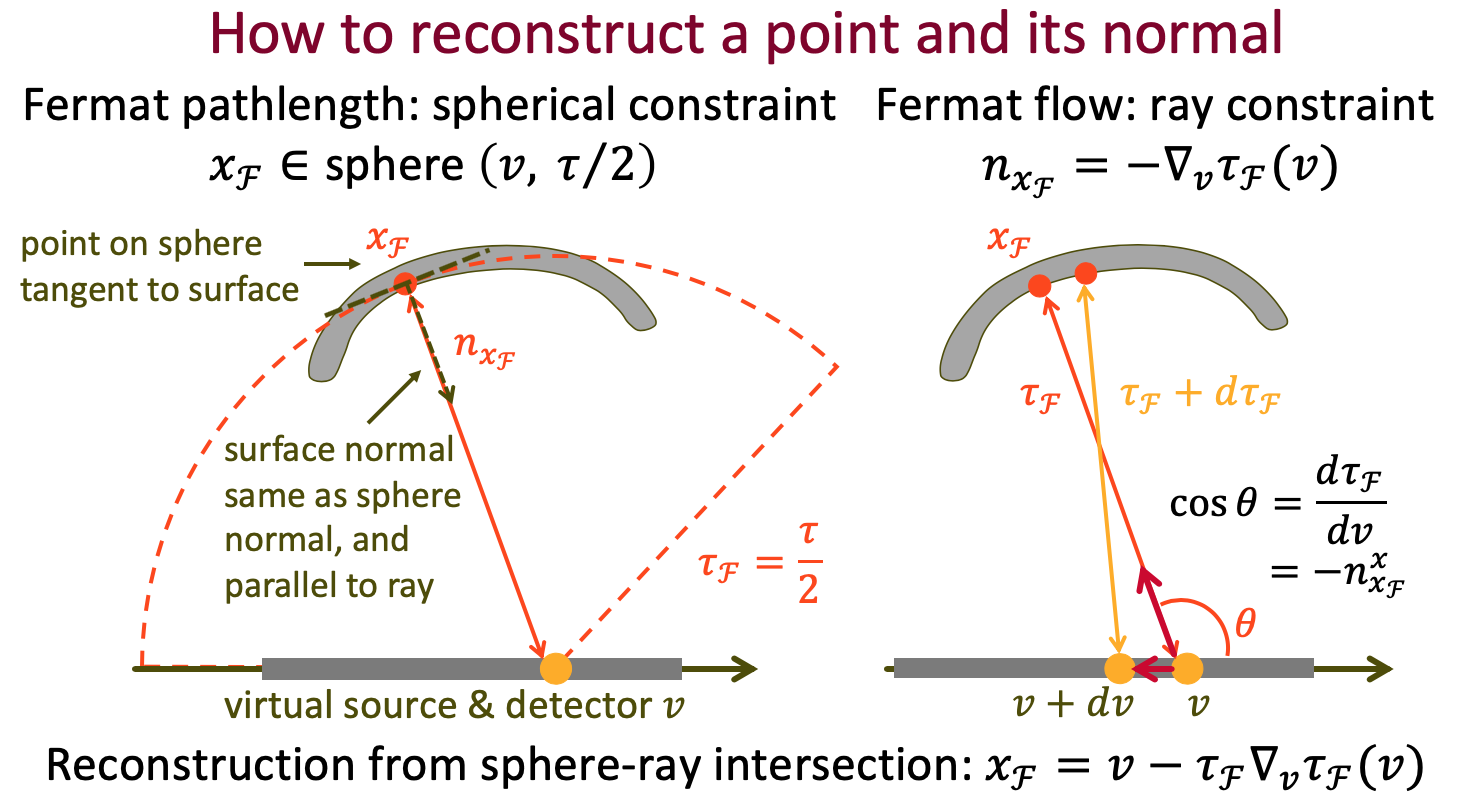

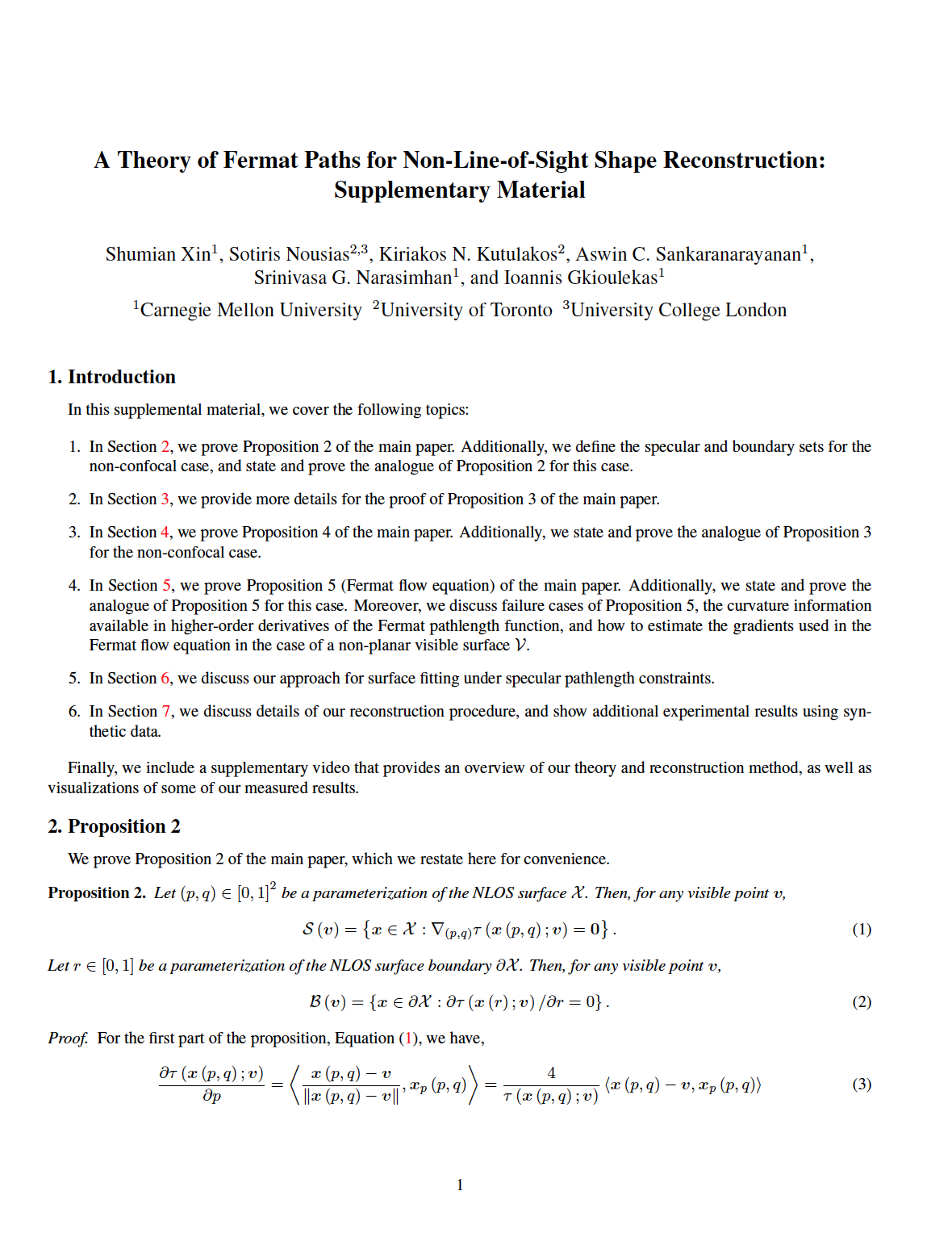

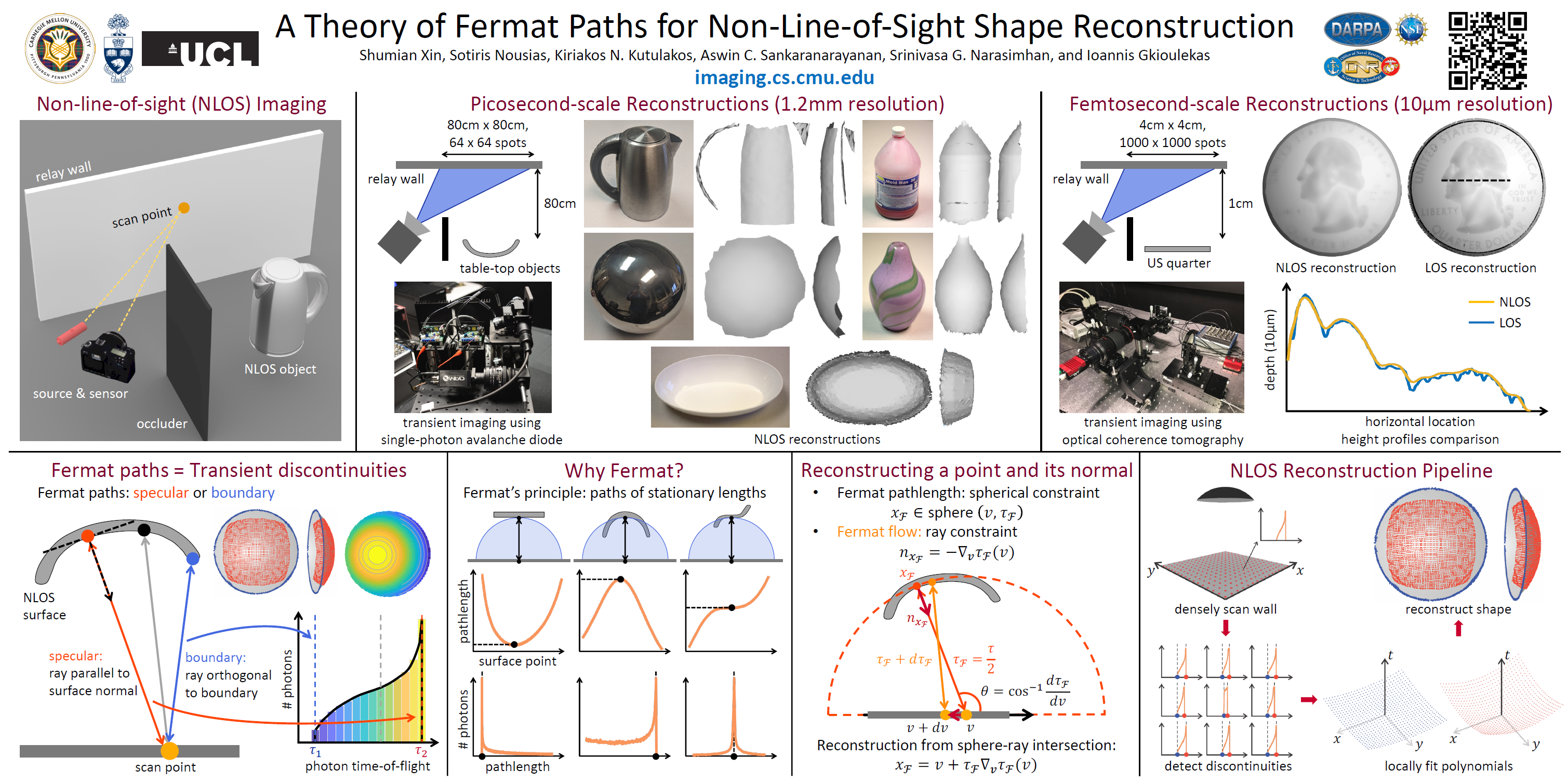

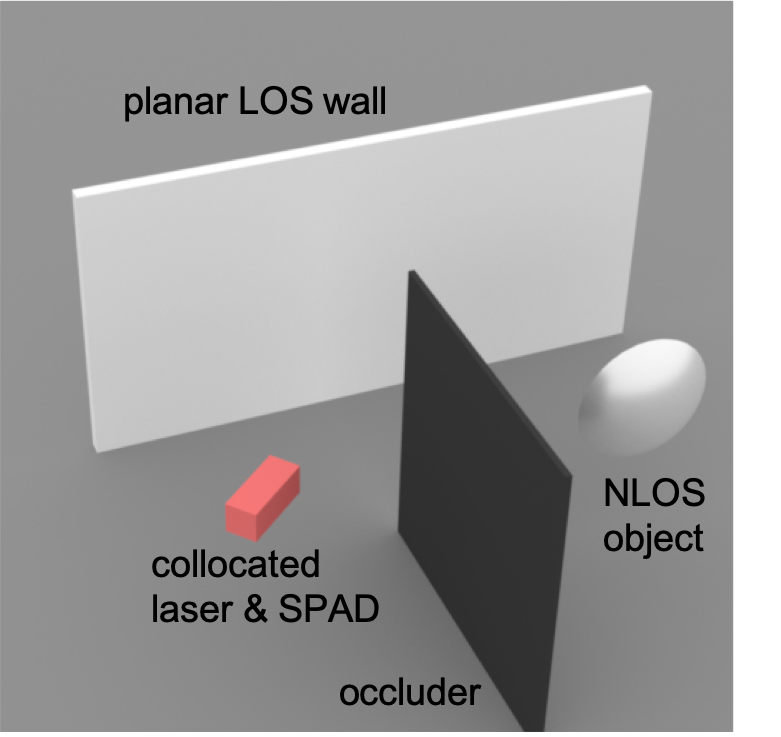

When talking about Non-Line-of-Sight (NLOS) imaging, we mainly refer to two scenarios. In the first scenario, an active light source and a sensor are collocated and look at a diffuse surface, e.g. a wall. The scene also contains an object that lies outside the field of view of the sensor. We then use the measurements captured by the sensor to reconstruct this object. We refer to this scenario as "looking around the corner".

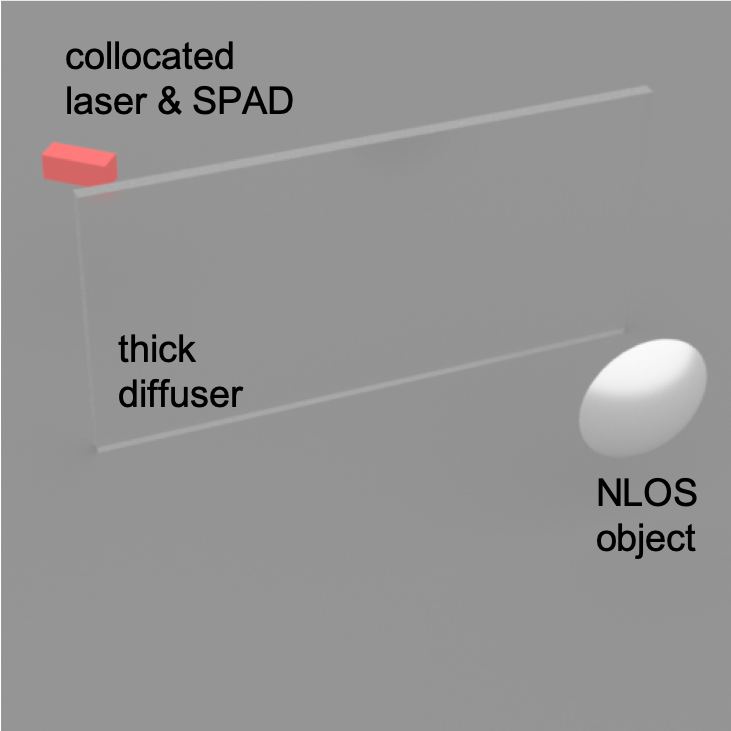

Alternatively, in the second scenario, the object may be within the field of view of the sensor but is occluded by a thick diffuser, e.g. a piece of paper. We refer to this scenario as "looking through a diffuser".

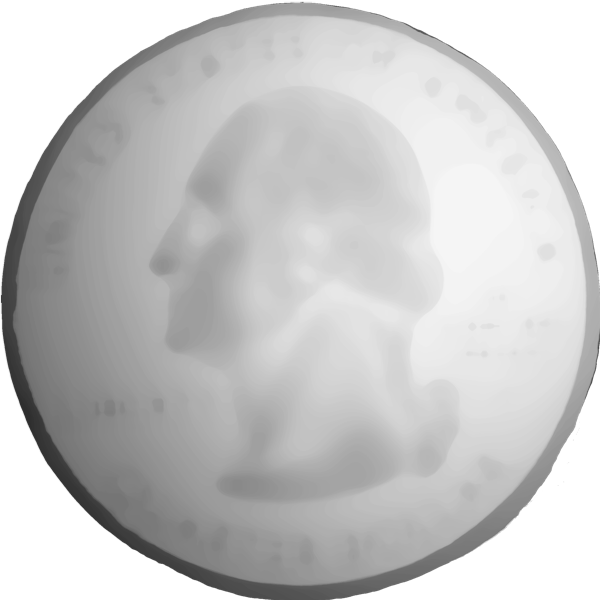

looking around the corner